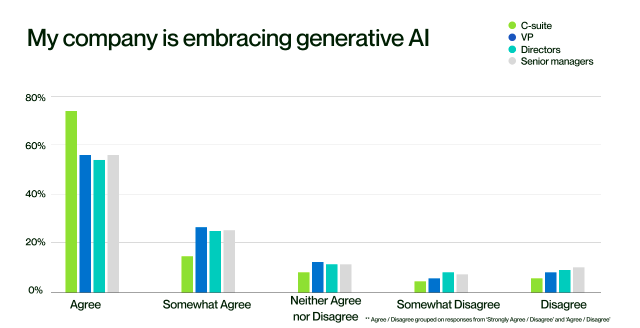

Upwork ran a survey last year on companies embracing Gen AI. 73% of C-suite executives believe their company is embracing it. Only 53% of senior managers agree.

The survey didn’t cover levels below this, but it’s a safe bet where that pattern is going.

This is partly explained by the leadership iceberg model.

But it’s also down to a fundamental difference between Gen AI and the technology most companies are used to.

Most technology is rule-based. It’s predictable. It’s designed and built to do a certain thing.

If it doesn’t, it’s a bug. And bugs can be found and fixed.

Gen AI isn’t like that.

It’s much more chaotic.

Ask the same fairly simple thing 10 times and you might get 5-6 variants of the answer.

Ask something complex 10 times and you’ll get 10 different variants.

Of course, there are techniques you can learn to get more control over parts of this.

But you can’t just configure it, teach someone how to use it, support them to adopt it and then get the outputs you expect.

For just about every use case, adopting Gen AI means experimenting with it for that use case: trying hundreds of variations of what you ask, how you ask, what data you use and don’t use, what output you want, and so on.

Sometimes you’ll get to what you want and start to realise some real benefits. Sometimes you won’t. You won’t necessarily know before you start.

And you can’t just overcome this with comms, training and reinforcement.

It needs time to experiment, going through each trial-and-error iteration until it does the job. And then it needs mechanisms to share that learning onwards.

That’s the reality of embracing Gen AI at the moment: time, trial and error, and unpredictable outcomes.

The sooner leaders accept that reality, the sooner that perception iceberg can start to melt.